Toward perceptually-consistent stereo

Stereo is the estimation of depth from two views of the same scene. A stereo image pair carries two types of depth cues: matching and occlusion. Matching refers to regions of the scene that are visible in both views, and occlusion refers to regions that are visible in one view but hidden in the other by a nearby occluder. Vision science has shown that both types of information are used for depth estimation early in the visual cortex. Human observers can perceive depth even when matching cues are absent or very weak, a capability that most computer vision stereo systems still lack.

Stereo viewer

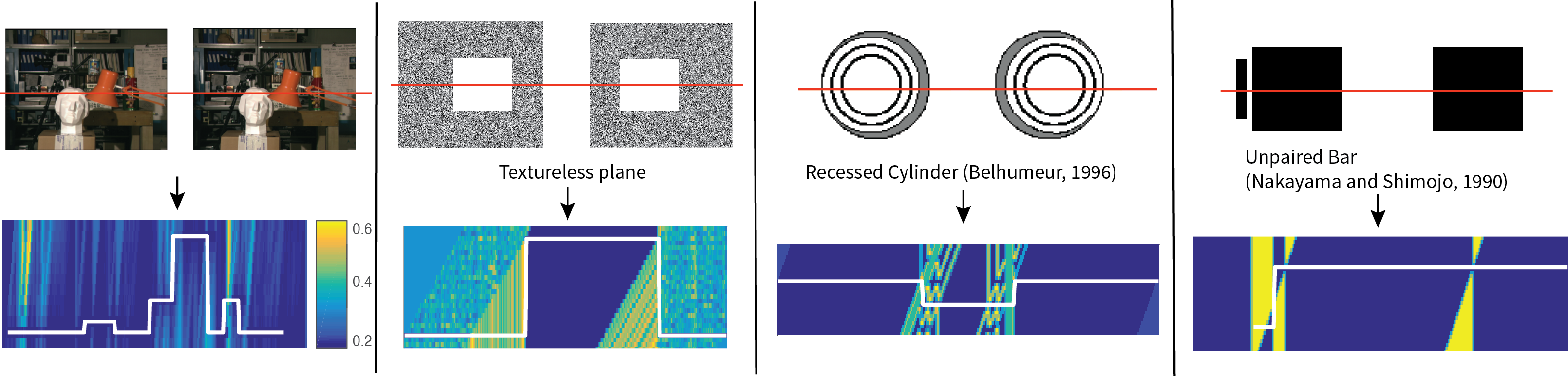

In our ICCV 2017 paper below, we collected a perceptual dataset of twelve stimuli, most of which were invented by vision scientists to study human stereopsis. Check out our stereoscope viewer for the stereo images described in the papers, including the ones in the image above.

Toward perceptually-consistent stereo: A scanline study

We show that many state-of-the-art stereo algorithms fail on perceptual stimuli with missing matching cues because they ignore the occlusion cue. We design an energy function that properly combines the matching and occlusion cues, and a dynamic programming algorithm that finds the exact global minimum of this energy function on a scanline. We show that the global minimum matches human perception on most stimuli.

Toward perceptually-consistent stereo: A scanline study. Jialiang Wang, Daniel Glasner, and Todd Zickler.

In Proc. International Conference on Computer Vision (ICCV), 2017. [Poster]
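To give a feel for what "exact global minimum on a scanline" means, here is a minimal sketch of a classic scanline dynamic program with a fixed per-pixel occlusion penalty. It is not the energy function from the paper, which combines the matching and occlusion cues in its own way. The function name `scanline_stereo`, the absolute-difference matching cost, and the `occlusion_cost` parameter are all assumptions made for illustration.

```python
def scanline_stereo(left, right, occlusion_cost=5.0):
    """Align two rectified scanlines by dynamic programming.

    Illustrative energy (an assumption, not the paper's):
      - matching left[i] with right[j] costs |left[i] - right[j]|
      - leaving a pixel unmatched (occluded in the other view)
        costs occlusion_cost
    Returns (disparity, energy). disparity[i] is i - j for a matched
    left pixel and None for a left pixel labeled as occluded.
    """
    n, m = len(left), len(right)
    # cost[i][j]: minimum energy of aligning left[:i] with right[:j]
    cost = [[0.0] * (m + 1) for _ in range(n + 1)]
    move = [[None] * (m + 1) for _ in range(n + 1)]

    # Borders: every pixel seen so far is unmatched.
    for i in range(1, n + 1):
        cost[i][0] = i * occlusion_cost
        move[i][0] = "skip_left"
    for j in range(1, m + 1):
        cost[0][j] = j * occlusion_cost
        move[0][j] = "skip_right"

    for i in range(1, n + 1):
        for j in range(1, m + 1):
            candidates = [
                (cost[i - 1][j - 1] + abs(left[i - 1] - right[j - 1]), "match"),
                (cost[i - 1][j] + occlusion_cost, "skip_left"),
                (cost[i][j - 1] + occlusion_cost, "skip_right"),
            ]
            cost[i][j], move[i][j] = min(candidates)

    # Backtrack from the end to recover the minimizing alignment.
    disparity = [None] * n
    i, j = n, m
    while i > 0 or j > 0:
        step = move[i][j]
        if step == "match":
            disparity[i - 1] = (i - 1) - (j - 1)
            i, j = i - 1, j - 1
        elif step == "skip_left":
            i -= 1
        else:
            j -= 1
    return disparity, cost[n][m]


# Toy scanlines: the right view is the left view shifted by one pixel.
left = [10, 20, 30, 40, 50, 60]
right = [20, 30, 40, 50, 60, 70]
disparity, energy = scanline_stereo(left, right, occlusion_cost=5.0)
print(disparity, energy)
```

In this toy case the first left pixel has no counterpart in the right scanline, so the minimizer leaves it unmatched and assigns disparity 1 to the rest. Because the recurrence considers every alignment of the two scanlines, the returned energy is the global minimum of this illustrative energy, not a local one.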

A dynamic programming algorithm for perceptually-consistent stereo

A more detailed description of the dynamic programming algorithm in our ICCV'17 paper.

The perceptual dataset can be downloaded here.

Local Detection of Stereo Occlusion Boundaries

In this paper, we introduce a taxonomy of local occlusion boundary signatures among patterns of stereo matching scores, categorized by the level of texture in the nearby foreground and background surfaces. This taxonomy motivates the investigation of detectors for stereo occlusion boundaries in different types of scenes. Accordingly, we design a detector using a simple feedforward network with relatively small receptive fields. We show that the local detector produces better boundaries than many other stereo methods, even without incorporating explicit stereo matching, top-down contextual cues, or single-image boundary cues based on texture and intensity.

Local Detection of Stereo Occlusion Boundaries.

Jialiang Wang and Todd Zickler.

In Proc. Computer Vision and Pattern Recognition (CVPR), 2019.

[Poster]

Labelled Middlebury occlusion boundaries. Download here.

Labelled Sintel occlusion boundaries (first frame of each sequence only). Download here.