Experimental Results

Captured Images: These are images of various objects captured with a Canon EOS 40D in a lab setting under a directional light source. A chrome sphere placed in the scene was used to determine the ground-truth lighting direction. The 99th-percentile intensity value in each image was assumed to correspond to the product of lighting intensity and albedo, and was used for normalization.
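The normalization step can be sketched as follows. This is a minimal illustration and not the code used for the experiments: the function name `normalize_by_percentile` and the random stand-in image are hypothetical, and we assume the image is a single-channel array of linear intensities.

```python
import numpy as np

def normalize_by_percentile(image, percentile=99.0):
    """Scale an image so that its `percentile`-th intensity maps to 1.0.

    The chosen percentile value is treated as lighting intensity times
    albedo, so dividing by it leaves (approximately) pure shading.
    """
    image = np.asarray(image, dtype=np.float64)
    scale = np.percentile(image, percentile)
    if scale <= 0:
        raise ValueError("percentile intensity must be positive")
    return image / scale

# Hypothetical stand-in for a captured image (linear intensities).
rng = np.random.default_rng(0)
raw = rng.uniform(0.0, 200.0, size=(64, 64))
normalized = normalize_by_percentile(raw)
```

Pixels brighter than the 99th percentile end up slightly above 1 in this sketch. Whether such values should be clipped is not stated above, so they are left untouched here.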

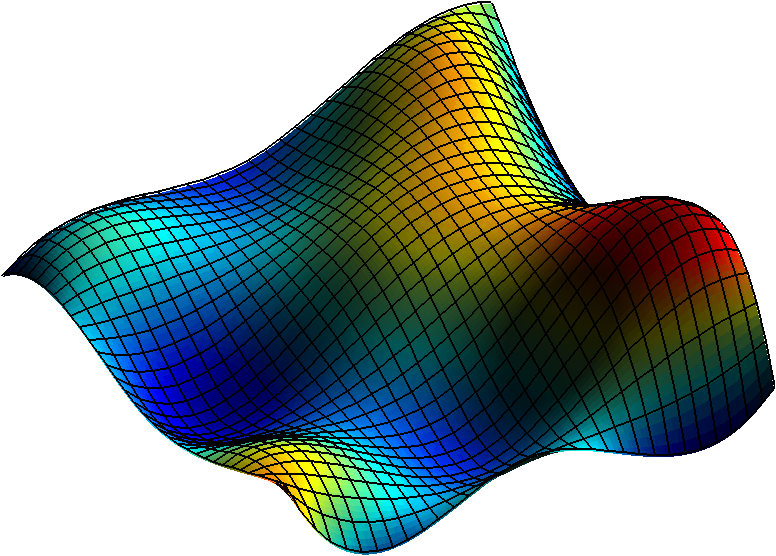

Synthetic Images: These are images of random surfaces. Each surface is constructed by sampling depth values from a Gaussian distribution on a 5×5 grid and interpolating them to a 128×128 surface, which is then rendered synthetically under horizontal directional lighting with an elevation angle of 60°. We also show results for cases when these synthetic images are corrupted by additive white Gaussian noise and by specular reflection.
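A rough sketch of this construction is given below. Several details are assumptions made for illustration only, since they are not specified above: bilinear interpolation, a Lambertian reflectance model, a light azimuth of 0°, the depth scale `depth_std`, and the noise level `sigma`. All function names are hypothetical, and the specular corruption is not modeled.

```python
import numpy as np

def random_surface(rng, coarse=5, size=128, depth_std=1.0):
    """Sample Gaussian depths on a coarse grid and bilinearly upsample them."""
    z = rng.normal(0.0, depth_std, size=(coarse, coarse))
    # Fine-grid sample positions expressed in coarse-grid coordinates.
    t = np.linspace(0.0, coarse - 1.0, size)
    i0 = np.minimum(np.floor(t).astype(int), coarse - 2)
    w = t - i0  # fractional offset in [0, 1]
    # Separable bilinear interpolation: first along rows, then along columns.
    rows = z[i0, :] * (1.0 - w)[:, None] + z[i0 + 1, :] * w[:, None]
    return rows[:, i0] * (1.0 - w)[None, :] + rows[:, i0 + 1] * w[None, :]

def render_lambertian(depth, elevation_deg=60.0, azimuth_deg=0.0, albedo=1.0):
    """Render a depth map under a distant light (Lambertian assumption)."""
    dz_dy, dz_dx = np.gradient(depth)
    # Unnormalized surface normal of z(x, y) is (-dz/dx, -dz/dy, 1).
    normals = np.stack([-dz_dx, -dz_dy, np.ones_like(depth)], axis=-1)
    normals /= np.linalg.norm(normals, axis=-1, keepdims=True)
    el, az = np.deg2rad(elevation_deg), np.deg2rad(azimuth_deg)
    light = np.array([np.cos(el) * np.cos(az),
                      np.cos(el) * np.sin(az),
                      np.sin(el)])
    # Clamp negative dot products (attached shadows) to zero.
    return albedo * np.clip(normals @ light, 0.0, None)

def add_gaussian_noise(image, rng, sigma=0.05):
    """Corrupt an image with additive white Gaussian noise."""
    return image + rng.normal(0.0, sigma, size=image.shape)

rng = np.random.default_rng(0)
depth = random_surface(rng, depth_std=10.0)  # depth scale is an assumption
image = render_lambertian(depth)
noisy = add_gaussian_noise(image, rng)
```

Depth is measured in pixel units in this sketch, so `depth_std` controls how steep the rendered surface appears. A flat surface renders to a constant intensity of sin(60°) under these settings.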

Baseline Methods: The baseline SFS algorithms used for comparison are:

- Polynomial Shape From Shading: This is the algorithm described in [1].

- Cross Scale Minimization with Shape Prior: This algorithm uses cross-scale minimization of the error with respect to the observed intensities, which is also employed by the SIRFS algorithm [2]. Note that for these results, we assume that the illuminant and reflectance are known (i.e., we do not use the light estimation and intrinsic image decomposition components of the SIRFS algorithm), and we do NOT use contour information (which is available for real image sets). For the shape prior proposed in [2], we used the parameters provided by the authors of [2], which were trained on the MIT intrinsic image dataset. We also ran experiments with a prior trained on the synthetic surfaces, but found that this did not improve performance.

References

[1] A. Ecker and A. D. Jepson, “Polynomial shape from shading,” in Proc. CVPR, 2010.

[2] J. T. Barron and J. Malik, “Shape, illumination, and reflectance from shading,” Technical Report, EECS, UC Berkeley, 2013.